Google is determined to level the playing field, reward good content and punish the cheaters. How will they achieve this?

There is plenty going on at Google at the moment, but let’s highlight three key elements relevant to this article, even though they may not seem obvious at first:

- Improved spam detection

- Knowledge Graph

- Social Graph

Improved Spam Detection

Web spam and aggressive SEO tend to have a domino effect due to a lack of ‘justice’ and equal treatment of all websites by Google. If one webmaster gets away with cheating, the others will follow. In 2010 we wrote about this in detail: Downward Spiral of Questionable SEO Practices and Why White Hat SEO Struggles to Survive.

This year SXSW featured a panel named “Dear Google & Bing: Help Me Rank Better!” with Danny Sullivan (Search Engine Land), Duane Forrester (Bing) and Matt Cutts (Google).

During the panel discussion Matt Cutts announced an upcoming change to Google’s algorithm. The change is all about catching overly aggressive SEO signals and taking action against affected pages:

“We are trying to level the playing field a bit. All those people doing, for lack of a better word, over optimization or overly SEO – versus those making great content and great site. We are trying to make GoogleBot smarter, make our relevance better, and we are also looking for those who abuse it, like too many keywords on a page, or exchange way too many links or go well beyond what you normally expect.”, Matt Cutts

You can download the recording of the session as an mp3.

What elements might Google be after?

- Keyword stuffing

- Thin content and SEO-themed pages (e.g. an entire page dedicated to car rental + postcode)

- Automated link exchanges

- Open invitations to game the system (on-site, via email, posted externally)

- Link buying

- Anchor text over-optimisation (excessive percentage of commercial terms in backlink profile)

- Aggregating and scraping duplicate content for SEO reasons (inserting own links in press releases)

- Unnatural links (spam, article submission, blog networks)

- Microsites and own domains to gain extra popularity in search engines

- “Clever” and “creative” but sneaky tactics (including anchor text based widget distribution)
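Google has not published how it measures any of these signals, so the following is only a rough self-audit sketch for two items on the list: keyword stuffing and anchor text over-optimisation. The function names and the sample data are hypothetical, and no threshold is implied; the numbers are useful mainly for comparing your own pages and backlink profile against those of similar sites.

```python
import re


def keyword_density(text, phrase):
    """Share of the words in `text` taken up by occurrences of `phrase` (0.0 to 1.0)."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    target = re.findall(r"[a-z0-9']+", phrase.lower())
    if not words or not target:
        return 0.0
    n = len(target)
    hits = 0
    i = 0
    # Count non-overlapping occurrences of the phrase in the word sequence.
    while i <= len(words) - n:
        if words[i:i + n] == target:
            hits += 1
            i += n
        else:
            i += 1
    return hits * n / len(words)


def commercial_anchor_share(anchors, commercial_terms):
    """Fraction of backlink anchor texts that contain any commercial term."""
    if not anchors:
        return 0.0
    terms = [t.lower() for t in commercial_terms]
    flagged = sum(1 for a in anchors if any(t in a.lower() for t in terms))
    return flagged / len(anchors)


# Hypothetical sample data, for illustration only.
page_text = "Cheap car rental in Brisbane. Cheap car rental deals"
anchors = ["cheap car rental", "DEJAN", "click here", "car rental Brisbane"]

density = keyword_density(page_text, "car rental")
share = commercial_anchor_share(anchors, ["car rental"])
print(f"keyword density: {density:.2f}, commercial anchor share: {share:.2f}")
```

A high value on either measure does not prove a problem on its own; it simply points to the pages and links worth reviewing by hand.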

It’s a bit surprising they are announcing this, considering that they have already taken action against many of these schemes. Perhaps this time they have an algorithm which can determine over-optimisation with a greater degree of certainty.

As usual, we expect all borderline cases to receive warning emails before any filters and penalties are applied. The best thing to do now is to keep an eye on the Google Webmaster Forums over the next 2-4 weeks.

Knowledge Graph

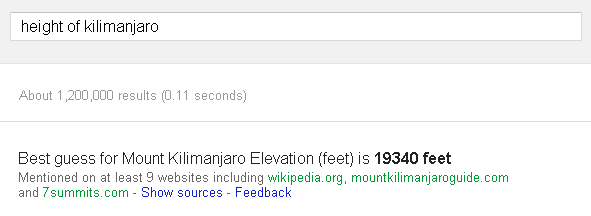

Google engineers are not satisfied with the quality of their search algorithm and admit that their search engine is not that good at truly understanding the user query.

They want to reach a point where a user asks a question and gets a solid answer instead of many choices. This is of course applicable only in certain situations, and some elements of it are already visible in search: conversions, mathematics, schedules, technical data and facts.

The Wall Street Journal published an article titled “Google Gives Search a Refresh” featuring Amit Singhal (Google), giving us a glimpse of the direction the world’s biggest search engine is taking. Prior to that interview Singhal was also featured in Mashable, where he talked about Google’s ‘knowledge graph’ and the impact it could have on search.

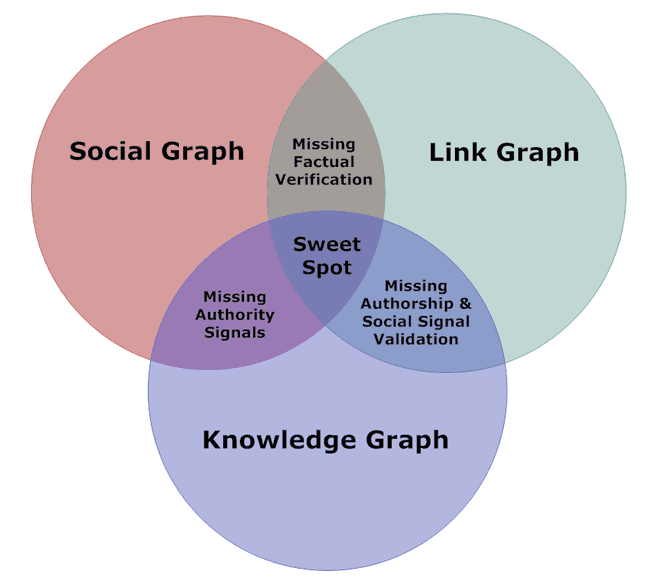

Rather than reinventing the wheel, Google found an interesting acquisition target, “Freebase”, and knew what to do with all that data. Freebase is basically a third addition to the “graph” entity at Google.

This acquisition may seem like a quick hack, but Google assures us that they’re taking the linked data they have much further and using the Freebase knowledge graph as the initial seed to create a much larger knowledge collection of interconnected entities and their various attributes. Google grew the Freebase database from 12 to 200 million entities and continues to invest heavily in its growth and development.

Social Graph

Google has unified and merged many of its services, and as part of that we can expect their growing social graph to start making a greater impact on search results.

This change will not only manifest through explicit means such as Search Plus Your World and Google+ search but will find its way into classic search results, where it will represent a meaningful signal and a ‘validator’ of the link and knowledge graph blend. Part of this mix will naturally be authorship signals and their own internal “AuthorRank” for bloggers, authors and journalists, or “PersonRank” in the context of typical social interactions.

The key to the social graph is of course its structure and our connectivity with other nodes (people) within it. Google calculates connectivity on two levels: implicit and explicit. This means that they use both our own ‘declared’ connections and the ones we form unknowingly through social interaction, sharing, +1’ing and commenting on Google+.
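One way to picture the two levels of connectivity is a graph in which each pair of people can have a declared link and a count of interactions. The sketch below is purely illustrative: the `SocialGraph` class, the weights and the scoring formula are assumptions made for this example, not a description of how Google calculates anything.

```python
from collections import defaultdict


class SocialGraph:
    """Toy model: explicit (declared) and implicit (interaction-based) ties."""

    def __init__(self, explicit_weight=1.0, implicit_weight=0.25):
        # The weights are arbitrary assumptions chosen for the example.
        self.explicit_weight = explicit_weight
        self.implicit_weight = implicit_weight
        self.explicit = defaultdict(set)  # person -> declared connections
        self.implicit = defaultdict(lambda: defaultdict(int))  # person -> other -> interactions

    def follow(self, a, b):
        """Record an explicit, declared connection from a to b."""
        self.explicit[a].add(b)

    def interact(self, a, b):
        """Record one implicit signal (a share, +1 or comment) from a towards b."""
        self.implicit[a][b] += 1

    def strength(self, a, b):
        """Combine both levels into one directed connection score."""
        declared = self.explicit_weight if b in self.explicit.get(a, ()) else 0.0
        interactions = self.implicit.get(a, {}).get(b, 0)
        return declared + self.implicit_weight * interactions


graph = SocialGraph()
graph.follow("ann", "bob")    # ann declares a connection to bob
graph.interact("ann", "bob")  # ann also +1's one of bob's posts
graph.interact("ann", "cat")  # ann comments on cat's post without following her

for other in ("bob", "cat"):
    print(other, graph.strength("ann", other))
```

In this toy model Ann's tie to Bob scores higher than her tie to Cat because it combines a declared connection with an interaction, while the tie to Cat exists only implicitly.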

Your Thoughts

We would love to hear what you think will be the biggest changes at Google in 2012. Please leave your ideas in the comments below or in the Google+ discussion about this article.

Dan Petrovic, the managing director of DEJAN, is Australia’s best-known name in the field of search engine optimisation. Dan is a web author, innovator and a highly regarded search industry event speaker.

ORCID iD: https://orcid.org/0000-0002-6886-3211

Nice information, and I hope websites that work very hard get good results.

It will be interesting to see the full rollout of the Knowledge Graph too; social and content are key elements for 2012, imo. Overdone link strategies and spam will hopefully get hit.

Hello Dan, thanks for the beautiful article. I had some knowledge of the other things, except the knowledge graph. It will be something very hot once it comes out fully. Let’s see how we can make the world better along with Google….

When Google launched social engagement metrics in Google Analytics, followed by +1 metrics in Google Webmaster Central, you could read between the lines in terms of the value of a +1. As you rightly said in your first point, +1’s will become more and more critical as Google exerts its dominance in the world of social search.

Google admits that they don’t understand user query intent? That’s interesting since Larry Page, Google co-founder, says, “the perfect search engine understands exactly what you mean and gives you exactly what you want.” Guess they’ve got a ways to go.

You might also argue that the so-called algorithm improvements for targeting web spam could encourage a whole new wave of black hat SEOs dedicated to the dark art of “negative SEO”. It now appears Google has made it easier than ever to effectively target and remove your competitors from the search results by doing exactly what used to work to get websites ranking.